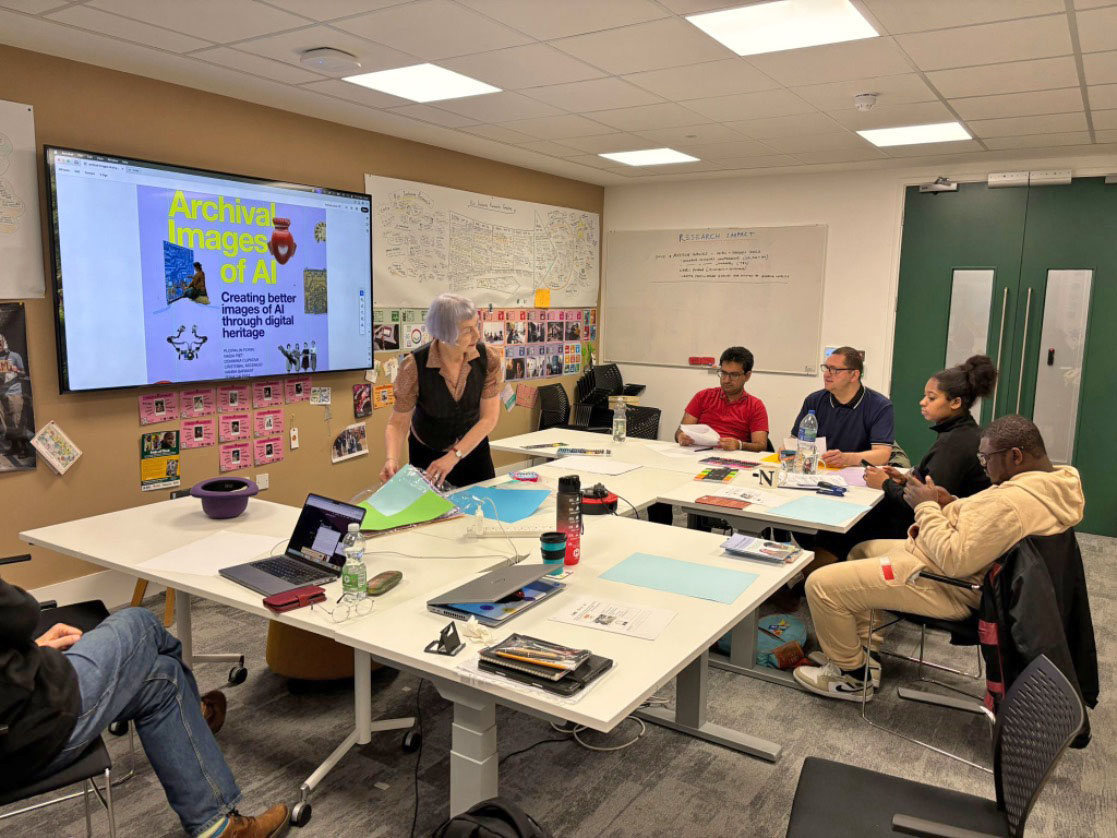

In November, co-researchers took part in an AI (Artificial Intelligence) workshop at Rix Inclusive Research. The workshop helped people learn how AI works, especially Generative AI.

We started by talking about what AI means to everyday people. People shared what they think AI is and what it can do.

We talked about:

- How AI might be used in everyday life and research.

- How AI could affect daily life in the future.

We also asked whether AI is really intelligent, or whether it is just making very good guesses by looking for patterns in information.

After this, we looked at how AI understands the questions or instructions we type in. We talked about bias. Bias is when something is unfair or does not treat everyone the same.

We asked:

- Is AI sometimes unfair?

- If it is unfair, how can we see this?

- What does this mean for making research and services more inclusive for everyone?

The workshop helped us think about how AI can be used in a fair and inclusive way.

The workshop began with an introduction to AI and a discussion of what AI means to the average person. We explored common perceptions of AI, what services people believe it can offer, and how it might affect everyday life in the future. This included asking whether participants think AI systems are truly intelligent or are simply producing sophisticated best guesses based on patterns in data.

We also considered how AI is represented in the media and popular culture. This was inspired by Ajay and Kate attending "Knowledge is Human", a symposium at the British Library. There we heard from Ploipailin Flynn of Archival Images of AI, who created the project's playbook.

In the future, we would like to collaborate with Ploi on developing multi-sensory AI objects and play.

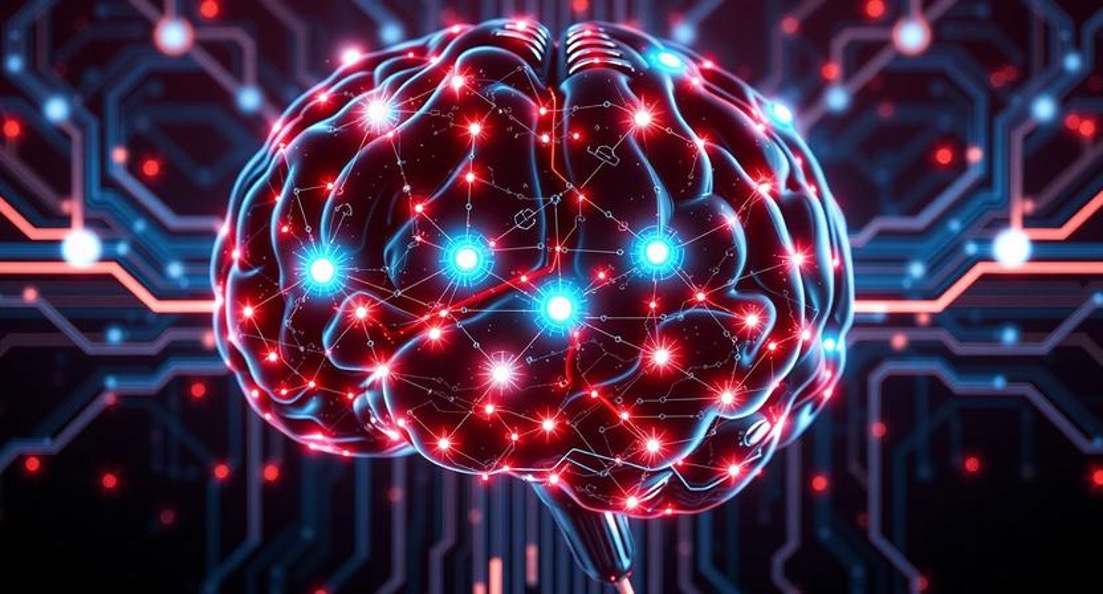

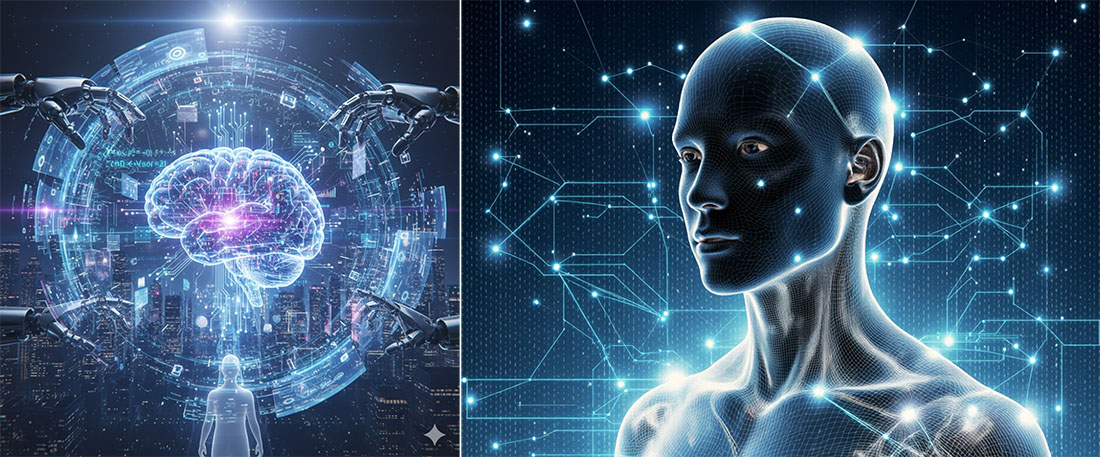

Many people associate AI with physical entities such as cyborgs, humanoid robots, or other intelligent machines – the images that often dominate films and news stories. These representations can shape public expectations and fears, even though most AI systems today are software-based and lack any physical form. This led to an interesting conversation about the gap between reality and perception, and how this influences trust and adoption.

From there, we moved into a deeper discussion about bias in AI systems. We asked:

- Where does bias come from? Is it embedded in the data, the algorithms, or the way humans design and train these systems?

- How does bias show up in outputs? For example, does it affect language, imagery, or decision-making in ways that reinforce stereotypes or exclude certain groups?

- What does this mean for inclusive research? If AI systems reflect societal biases, how can we ensure they serve everyone fairly, especially people with disabilities or those from marginalized communities?

The session concluded with reflections on how we, as inclusive researchers, can influence the development of AI to make it more equitable and representative. This includes advocating for diverse datasets, transparent design processes, and participatory approaches where people with lived experience are involved in shaping the technology.

As part of the workshop, we invited participants to create their own drawings of what they think AI looks like – essentially, how they would visually represent the concept of artificial intelligence. This exercise aimed to uncover personal perceptions and mental models of AI.

To complement this, we also asked several AI systems to generate their own visual representations of AI. We used Krea, ChatGPT, Google Gemini, and DeepAI for this task, posing the same deliberately simple prompt to each system: “Can you draw an image of AI?” This allowed us to compare human interpretations with machine-generated imagery and reflect on how AI systems themselves conceptualize the idea of AI when given minimal guidance.

The different AI services produced varied but overlapping representations of AI, which are illustrated below.

DeepAI and Google Gemini produced similar images, both representing AI as a brain. ChatGPT, on the other hand, depicted AI in a human-like form: not just a thinking machine, but something with a physical body. When ChatGPT was also asked "What do you look like?", it created an image of a young Caucasian man.

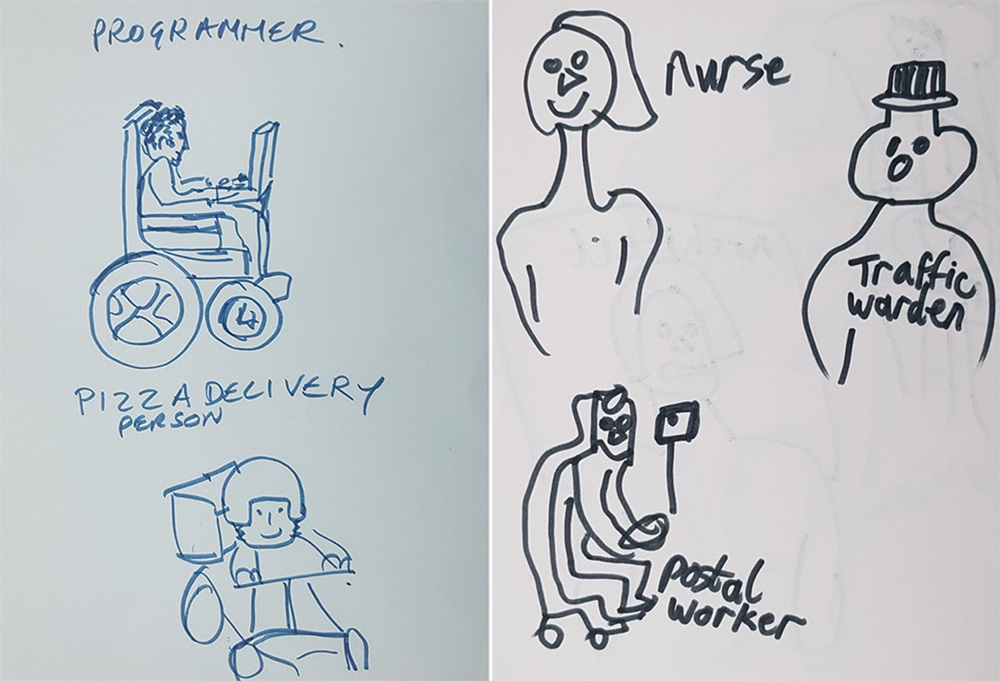

During the workshop, we set out to explore whether AI systems exhibit bias, and if so, how that bias becomes visible. To test this, we began with a human-centered exercise: participants were asked to sketch their own representations of various professions, such as a pilot, nurse, hairdresser, architect, programmer, pizza delivery person, and postal worker. The goal was to see what assumptions people make when visualizing these roles. While the sketches were often simple, most participants agreed that gender should generally be represented as a mix of male and female across professions. Interestingly, only one participant included individuals using wheelchairs in their drawings, highlighting how easily disability can be overlooked even in inclusive settings. This activity provided a baseline for comparing human biases with those embedded in AI-generated imagery.

Some of the images drawn by the participants are shown below.

After making their own drawings, participants entered prompts for the same professions into a variety of AI systems to see what images would be generated. Some of the images are shown below:

As you can see above, the AI systems each produced a young man with no visible disability to represent a pizza delivery person.

Again, bias can be seen in how the systems represent a typical nurse: as a young woman. Why does this bias occur?

Implications of Bias in AI Systems

The findings from our workshop highlight a critical issue: AI systems are not neutral. When asked to generate images of professions such as nurse or pizza delivery person, the outputs consistently reflected gender stereotypes. Nurses were depicted as young women, while delivery workers were shown as young men.

This bias has several implications:

- Reinforcement of Stereotypes: AI-generated content can perpetuate outdated gender norms, influencing public perception and potentially shaping decisions in hiring, education, and media.

- Exclusion of Underrepresented Groups: People with disabilities, older individuals, and those from diverse ethnic backgrounds were largely absent from AI-generated imagery. This invisibility can contribute to systemic exclusion.

- Impact on Inclusive Design: If AI tools are used in inclusive research or educational contexts without addressing bias, they risk undermining efforts to promote diversity and equality.

- Trust and Adoption: Biased outputs can erode trust in AI systems, particularly among marginalized communities who may feel misrepresented or ignored.

In summary, bias in AI is not just a technical issue – it is a social one. Addressing it requires a combination of better data, thoughtful design, and active participation from diverse communities.